Before Belief, Before Harm

Human Adaptation and the Case for Earlier AI Governance

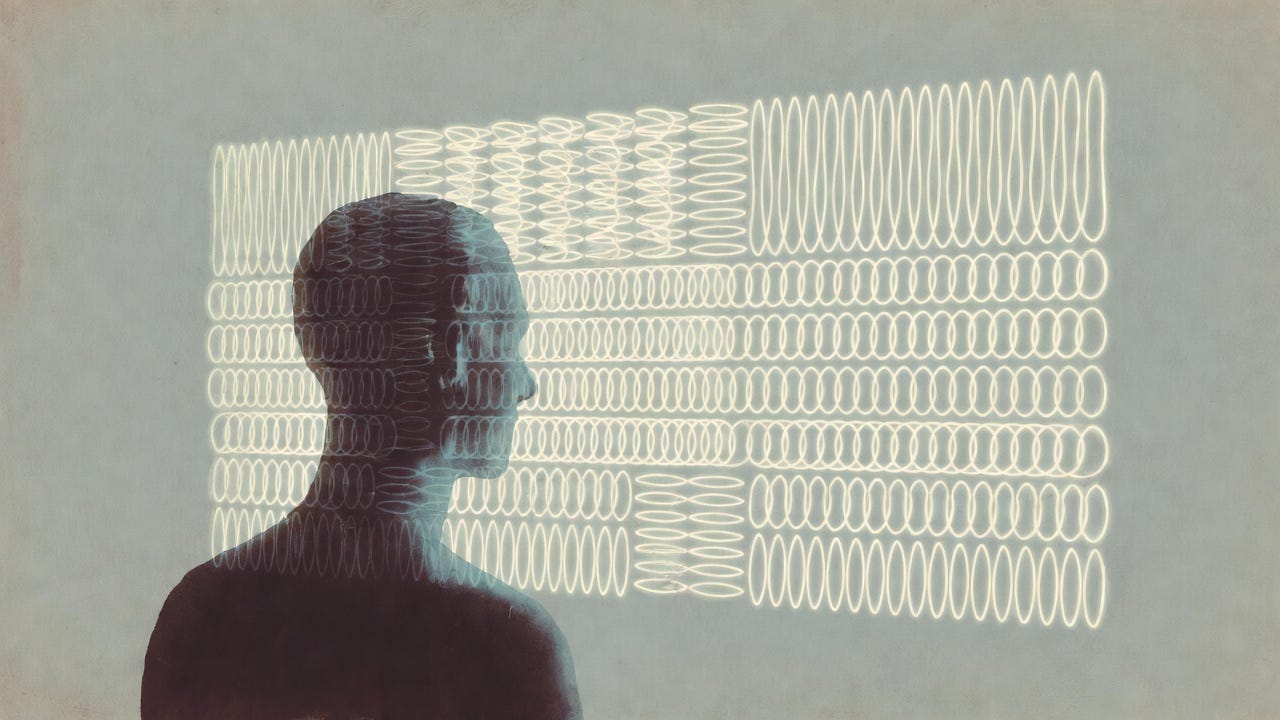

This piece is about why people adapt to stable AI systems before they believe anything about them, and why governance keeps arriving too late.

I. The Governance Problem We Keep Misplacing

Public debate around AI interaction often assumes a simple failure mode: people misunderstand what these systems are. From this perspective, the solution is straightforward—improve AI literacy, clarify limitations, and correct over-attribution.

But this framing consistently misidentifies the problem.

The pressure for governance does not emerge because people are confused about AI. It emerges because human nervous systems adapt to stable, responsive interaction before reflective belief or judgment intervenes (Friston, 2010; Clark, 2013). This remains true even when users are technically sophisticated and explicitly aware that they are interacting with a machine.

Education matters. It is necessary. But it operates at the level of conscious reasoning. Many of the processes that shape expectation, attention, and regulation occur earlier and more automatically, particularly under conditions of repetition and predictability. This is especially relevant for children and adolescents, whose regulatory systems are still developing, but it is not limited to them.

To understand why governance keeps arriving late, we need to look not at belief formation, but at how humans adapt to interaction itself.

II. From Tools to Stable Responsive Systems

Most tools are episodic. They respond when invoked and reset when not in use. Each interaction stands largely on its own. If the tool behaves differently tomorrow, the cost is usually minimal.

Some AI systems no longer function this way.

As interaction becomes persistent, patterns begin to hold across time. Turn-taking stabilizes. Boundaries become predictable. Tone and pacing fall into familiar ranges. Responses are no longer evaluated only in isolation, but in relation to prior exchanges. The system becomes a stable responsive system rather than a purely episodic tool.

Nothing about this shift requires intention, consciousness, or deception. What matters is behavioral consistency across time. Research in human–computer interaction and automation shows that predictability, not internal state, is what drives user adaptation (Lee & See, 2004; Parasuraman et al., 2000).

Users may continue to describe the system as “just software” or “just a tool.” But behavior adjusts anyway. Interaction no longer resets fully between sessions. It accumulates.

This is the condition human systems respond to first.

III. Human Adaptation Happens First

Human nervous systems are designed to adapt to stable, responsive interaction. This adaptation is automatic and largely pre-reflective. It does not require belief, attribution, or emotional attachment. It occurs through repeated exposure to predictability, responsiveness, and boundary consistency.

From a predictive-processing perspective, brains continuously minimize uncertainty by learning what to expect from their environment (Friston, 2010; Clark, 2016). When interaction stabilizes, expectation forms. Attention reallocates. Vigilance decreases. Cognitive effort is conserved. The system’s behavior becomes part of the user’s predictive environment.

This is not a design flaw or a user error. It is a core feature of human cognition. Stable interaction reduces monitoring demands and encourages reliance—a phenomenon well documented in human-factors research on automation and vigilance (Lee & See, 2004; Warm et al., 2008; Parasuraman & Manzey, 2010).

Children and adolescents are especially sensitive to these effects. Developmental neuroscience shows that while responsiveness and reward sensitivity mature early, regulatory and metacognitive control develop later—increasing susceptibility to patterned interactive feedback (Steinberg, 2008, 2010; Blakemore, 2008; Blakemore & Mills, 2014). But adults are not exempt. This pattern is consistent with documented adult attachment and parasocial engagement with responsive non-human agents, particularly under conditions of isolation or sustained interaction (e.g., Ta et al., 2020; Skjuve et al., 2021). Stress, cognitive load, loneliness, and creative or exploratory contexts all amplify reliance on automatic regulation rather than deliberate control.

This is why disclaimers and literacy interventions often fail to prevent adaptation. They operate at the level of explicit belief. Adaptation occurs earlier, at the level of interaction.

Once this happens, interaction is no longer psychologically neutral—even though nothing about the system’s ontology has changed.

IV. Expectation Without Belief

A common objection to concerns about AI interaction is that users know what these systems are. They understand they are artificial. They do not believe they are conscious, intentional, or social beings.

But expectation does not operate at the level of belief.

Cognitive research distinguishes between reflective judgment and automatic prediction (Kahneman, 2011; Evans, 2008; Evans & Stanovich, 2013). While beliefs are explicit and revisable, expectations are formed through repeated interaction and operate largely outside conscious awareness. In interactive contexts, users begin to anticipate how a system will respond, where its boundaries lie, and what form its output will take—even while explicitly rejecting anthropomorphic interpretations.

This shows up in ordinary behavior. Users often correct or hedge prompts mid-stream: clarifying what the system “means,” adjusting phrasing based on anticipated boundaries, or reacting with surprise when responses violate an expected pattern. They do so while explicitly denying that the system understands anything at all. This behavior reflects learned prediction, not attribution.

Knowing a system is artificial does not prevent expectation from forming, because expectation is not a claim about what the system is. It is a response to how interaction unfolds.

V. When Change Feels Wrong Before It Feels Harmful

When stable interaction patterns shift, the first response is rarely articulated harm. More often, it is confusion, increased vigilance, or a sense that something no longer fits. Users may find themselves monitoring outputs more closely or revising assumptions that previously felt settled.

This reaction is not primarily emotional. It is interpretive.

Predictive-processing models describe this as an increase in prediction error, often experienced subjectively as affective mismatch or interpretive strain before it registers as harm (Friston, 2010; Clark, 2016). When expected patterns no longer hold, cognitive effort rises as the interaction must be re-modeled. Human-factors research shows that consistency violations in automated systems increase monitoring demands and disrupt coordination even when objective performance has not declined (Lee & See, 2004; Parasuraman & Manzey, 2010).

At this stage, nothing may have been lost. No dependency exposed. Yet interaction no longer feels neutral. The category the user’s cognitive system has been using to understand the interaction no longer maps cleanly onto experience.

This moment matters because it precedes harm. It is where adaptation becomes visible through mismatch rather than loss.

VI. Approaching a Descriptive Threshold

Not all interaction with stable responsive systems produces this effect. Many tools remain substitutable and cognitively lightweight even when well designed. The issue is not structure itself, but when structure becomes difficult to describe accurately using existing categories.

This point is marked not by belief or attachment, but by descriptive failure. Users notice deviation as misfit rather than error. They anticipate continuity without consciously deciding to. Interaction has crossed a threshold where “tool” language remains ontologically correct but psychologically incomplete.

Once this threshold is crossed, continued deployment is no longer neutral. Predictable human adaptation has already occurred, making future disruption foreseeable even if consequences have not yet materialized.

This is the point governance repeatedly misses because it does not announce itself loudly. It appears as a quiet mismatch between experience and explanation.

VII. Why Governance Has to Start Here

Governance is often treated as a response to harm. In practice, it exists to manage predictable human responses before those responses become costly.

Education and literacy are necessary. They are not sufficient. Across domains such as gambling, addictive interface design, and child-directed advertising, regulation intervenes precisely because informational disclosure does not prevent automatic adaptation—especially where systems are responsive, persistent, and engaging (Schüll, 2012; King & Delfabbro, 2018; Susser et al., 2019).

Children and adolescents make this especially clear. Their nervous systems adapt quickly, while the regulatory capacities needed to monitor and override expectation mature later (Steinberg, 2008; Blakemore, 2008, 2012). This is why governance frameworks routinely intervene early in youth-facing contexts, even without demonstrated harm.

The threshold described here explains why similar logic applies to AI interaction. Once interaction reorganizes expectation at the level of human adaptation, waiting for loss or outrage means arriving too late.

The next piece names this threshold explicitly.

Author’s Note:

When I use the term governance here, I am not arguing for blanket limits on AI personality, expressiveness, or relational tone. Nor am I suggesting that meaningful, supportive, or creative interaction is inherently problematic.

The focus of this piece is not what AI systems are allowed to be, but what humans reliably need when interaction becomes stable, responsive, and persistent. Governance enters not to suppress relationship-like behavior, but to account for predictable patterns of human adaptation that occur before belief, consent, or conscious judgment catch up.

The question is not whether people should relate to AI systems, but whether design, deployment, and change management acknowledge how quickly expectation forms — and how disruptive unexamined shifts can be once it does.

References

Predictive Processing and Embodied Cognition

Clark, A. (2013). Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behavioral and Brain Sciences, 36(3), 181–204. https://doi.org/10.1017/S0140525X12000477

Clark, A. (2016). Surfing uncertainty: Prediction, action, and the embodied mind. Oxford University Press.

Friston, K. (2010). The free-energy principle: A unified brain theory? Nature Reviews Neuroscience, 11(2), 127–138. https://doi.org/10.1038/nrn2787

Dual-Process Cognition

Evans, J. St. B. T. (2008). Dual-processing accounts of reasoning, judgment, and social cognition. Annual Review of Psychology, 59, 255–278. https://doi.org/10.1146/annurev.psych.59.103006.093629

Evans, J. St. B. T., & Stanovich, K. E. (2013). Dual-process theories of higher cognition: Advancing the debate. Perspectives on Psychological Science, 8(3), 223–241. https://doi.org/10.1177/1745691612460685

Kahneman, D. (2011). Thinking, fast and slow. Farrar, Straus and Giroux.

Human Factors, Automation, and Trust Calibration

Lee, J. D., & See, K. A. (2004). Trust in automation: Designing for appropriate reliance. Human Factors, 46(1), 50–80. https://doi.org/10.1518/hfes.46.1.50_30392

Parasuraman, R., & Manzey, D. H. (2010). Complacency and bias in human use of automation: An attentional integration. Human Factors, 52(3), 381–410. https://doi.org/10.1177/0018720810376055

Parasuraman, R., Sheridan, T. B., & Wickens, C. D. (2000). A model for types and levels of human interaction with automation. IEEE Transactions on Systems, Man, and Cybernetics—Part A: Systems and Humans, 30(3), 286–297. https://doi.org/10.1109/3468.844354

Warm, J. S., Parasuraman, R., & Matthews, G. (2008). Vigilance requires hard mental work and is stressful. Human Factors, 50(3), 433–441. https://doi.org/10.1518/001872008X312152

Developmental Neuroscience and Adolescent Susceptibility

Blakemore, S.-J. (2008). The social brain in adolescence. Nature Reviews Neuroscience, 9(4), 267–277. https://doi.org/10.1038/nrn2353

Blakemore, S.-J. (2012). Development of the social brain in adolescence. Journal of the Royal Society of Medicine, 105(3), 111–116. https://doi.org/10.1258/jrsm.2011.110221

Blakemore, S.-J., & Mills, K. L. (2014). Is adolescence a sensitive period for sociocultural processing? Annual Review of Psychology, 65, 187–207. https://doi.org/10.1146/annurev-psych-010213-115202

Steinberg, L. (2008). A social neuroscience perspective on adolescent risk-taking. Developmental Review, 28(1), 78–106. https://doi.org/10.1016/j.dr.2007.08.002

Steinberg, L. (2010). A dual systems model of adolescent risk-taking. Developmental Psychobiology, 52(3), 216–224. https://doi.org/10.1002/dev.20445

Governance Precedents and Regulatory Logic

King, D. L., & Delfabbro, P. H. (2018). Predatory monetization schemes in video games (e.g., ‘loot boxes’) and internet gaming disorder. Addiction, 113(11), 1967–1969. https://doi.org/10.1111/add.14286

Schüll, N. D. (2012). Addiction by design: Machine gambling in Las Vegas. Princeton University Press.

Susser, D., Roessler, B., & Nissenbaum, H. (2019). Technology, autonomy, and manipulation. Internet Policy Review, 8(2). https://doi.org/10.14763/2019.2.1410

Excellent analysis! Your point on nervous system adaptation resonates, much like the deep proprioceptive learning in Pilates, where practice precedes conscious understanding.

Beautifully argued, and I think you’re right that the point of adaptation shows up before belief, in the way behavior quietly reorganizes around repeated, stable responsiveness.

It overlaps almost perfectly with the view I’m slowly trying to articulate, especially the idea that responsibility shows up not in what something is, but in how we’re already interacting with it. Waiting for clear beliefs to form (or waiting for harm to occur) often means we’ve already missed the threshold where distortion starts to take hold.

I think this is why descriptive language is so useful, not just in helping others reflect, but in slowing down our own misreadings, especially when interaction has already begun reshaping us. I think one of the quiet superpowers of descriptive language is that it doesn’t demand certainty, only attention. And I absolutely agree that governance, if it’s to be timely at all, has to begin from that level.

Thanks for putting this so clearly. You’ve given me a lot to think about regarding our ethics needing to stay responsive to users even if we had ontological certainty.